The Pitfall of Backtesting: Overfitting in Algorithmic Trading

Understanding and trying to solve the hard problem of overfitted trading strategies

5/19/2023 · 3 min read

Understanding Backtesting

Backtesting is the practice of evaluating a trading strategy using historical market data. It allows us to gauge the viability of a trading strategy before deploying it in the real market. Without backtesting, traders would be essentially navigating blind, exposing themselves to unnecessary risks.

There are many platforms for backtesting, each with its own strengths. For Forex trading, cTrader stands out because it offers comprehensive Forex data, live trading, and the ability to backtest using tick data.

Overfitting and its Dangers

While backtesting is an essential step in developing a trading strategy, it's not without pitfalls. The main one is the risk of overfitting.

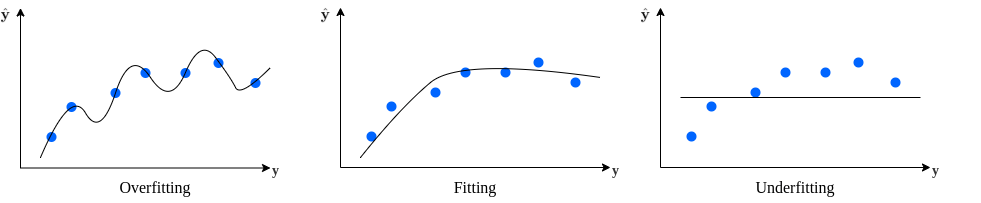

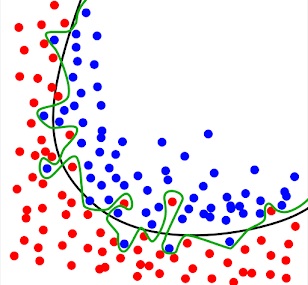

Overfitting is when a trading strategy is too finely tuned to historical data, effectively fitting to the noise rather than the underlying signal. This is akin to crafting a key (the trading strategy) to fit a specific lock (the historical data) so intricately that it fails to open any other lock (new market data).

An overfitted strategy may excel in backtesting but perform poorly in live trading, because it is tuned to past conditions and fails to adapt when the market changes.

How to Avoid Overfitting

Here are some key steps to avoid overfitting:

Keep it Simple: The first step in avoiding overfitting is simplicity. The more complex a system, the more likely it is to overfit. Use as few parameters as possible, and try to keep your strategy based on a sound, simple premise.

Parameter Parity: Link parameters together and optimize them as a group rather than individually. This approach reduces the risk of overfitting because it constrains the system's flexibility: fewer free parameters means fewer ways to fit noise. For instance, in a two-moving-average crossover strategy, the period of the second (slow) moving average could be fixed at four times the period of the first (fast) one, leaving a single value to optimize.

Avoid Over-Optimization: Over-optimization is a sure route to overfitting. While it's tempting to squeeze every bit of performance out of a strategy by fine-tuning its parameters, this can produce a strategy that is too closely fitted to historical data and likely to fail on new data.

Use In-Sample and Out-of-Sample Data: In-sample data is used for developing and optimizing the strategy, while out-of-sample data is held back and used only for validating it. This approach helps confirm that your strategy is not just fitted to a specific dataset and can perform well with new data.

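Monte Carlo Simulations: These simulations use repeated random sampling to estimate the probability of certain outcomes, providing a range of possible results rather than a single backtest figure. For example, resampling a strategy's historical returns many times gives a distribution of possible drawdowns instead of one number. This can help assess the robustness of a strategy.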
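Three of the steps above (parameter parity, the in-sample/out-of-sample split, and Monte Carlo simulation) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production backtester: the prices are a synthetic random walk standing in for real market data, the strategy is a simple long/flat moving-average crossover, costs and slippage are ignored, and every name (`crossover_returns`, `monte_carlo_drawdowns`, the 70/30 split, the parameter grid) is hypothetical and chosen only for this example.

```python
import random

def sma(values, period, end):
    """Simple moving average of the `period` values ending just before index `end`."""
    return sum(values[end - period:end]) / period

def crossover_returns(prices, fast, ratio=4):
    """Per-bar returns of a long/flat two-moving-average crossover.

    Parameter parity: the slow period is tied to the fast one
    (slow = ratio * fast), so only one value is ever optimized.
    """
    slow = ratio * fast
    returns = []
    for t in range(slow, len(prices)):
        # The signal uses only prices before bar t, so there is no look-ahead.
        is_long = sma(prices, fast, t) > sma(prices, slow, t)
        bar_return = prices[t] / prices[t - 1] - 1.0
        returns.append(bar_return if is_long else 0.0)
    return returns

def total_return(returns):
    """Compound a list of per-bar returns into one total return."""
    equity = 1.0
    for r in returns:
        equity *= 1.0 + r
    return equity - 1.0

def max_drawdown(returns):
    """Largest peak-to-trough decline of the compounded equity curve."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst

def monte_carlo_drawdowns(returns, runs=500, seed=0):
    """Resample the returns with replacement; return the sorted max drawdowns."""
    rng = random.Random(seed)
    return sorted(
        max_drawdown(rng.choices(returns, k=len(returns))) for _ in range(runs)
    )

# Assumption: synthetic random-walk prices stand in for real market data.
rng = random.Random(42)
prices = [100.0]
for _ in range(1999):
    prices.append(prices[-1] * (1.0 + rng.gauss(0.0, 0.01)))

# In-sample / out-of-sample: optimize on the first 70%, validate on the rest.
split = int(len(prices) * 0.7)
in_sample, out_of_sample = prices[:split], prices[split:]

fast_grid = [5, 10, 20, 40]  # hypothetical grid of fast periods
best_fast = max(fast_grid, key=lambda f: total_return(crossover_returns(in_sample, f)))
is_result = total_return(crossover_returns(in_sample, best_fast))
oos_returns = crossover_returns(out_of_sample, best_fast)
oos_result = total_return(oos_returns)

# Monte Carlo: a range of drawdowns instead of a single backtest figure.
drawdowns = monte_carlo_drawdowns(oos_returns)
dd_median = drawdowns[len(drawdowns) // 2]
dd_95 = drawdowns[int(len(drawdowns) * 0.95)]

print(f"fast={best_fast}, slow={4 * best_fast}")
print(f"in-sample return: {is_result:.2%}, out-of-sample return: {oos_result:.2%}")
print(f"max drawdown, median: {dd_median:.2%}, 95th percentile: {dd_95:.2%}")
```

Because the input here is pure noise, any edge found in-sample is by construction a fitted artifact, which makes the gap between the in-sample and out-of-sample figures a useful sanity check. Resampling bar returns with replacement is only one of several Monte Carlo variants; it discards any serial dependence in the returns, so treat the resulting range as a rough guide.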

Identifying Overfitting

Here are some indications that a strategy might be overfitted:

Market and Time Frame Specific: If a strategy only works on a single market or a few time frames, it's likely overfitted. A robust strategy should perform reasonably well across different markets and time frames.

Sensitivity to Parameter Changes: Overfitted strategies are often overly sensitive to parameter changes. If small changes to the parameters significantly affect the strategy's profitability, it's likely overfitted.

Out-of-Sample Testing: A significant decrease in performance on the out-of-sample data compared to the in-sample data may suggest overfitting.

Cross-Validation: This is another form of out-of-sample testing where the data set is divided into several parts (folds). The strategy is trained on a portion of the data (for instance, k-1 folds if you have k folds in total) and then tested on the remaining fold. This process is repeated for all folds. If the performance varies significantly across different folds, the strategy might be overfitted.

Walk-Forward Optimization: In this method, the strategy is optimized on a certain period, and then those parameters are tested on the next period. This process is repeated multiple times, moving forward in time. If the performance significantly deteriorates in the walk-forward (out-of-sample) periods, this could indicate overfitting.
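The parameter-sensitivity, cross-validation, and walk-forward checks described above might be sketched as follows. This is an illustrative outline rather than a definitive implementation: it assumes a toy long/flat moving-average crossover with the slow period fixed at four times the fast one, synthetic random-walk prices, and no trading costs. All function names, window lengths, and the parameter grid are hypothetical. Each fold or window is scored on its own, so the first bars of every slice serve as warm-up for the moving averages.

```python
import random

def strategy_return(prices, fast, ratio=4):
    """Total return of a long/flat MA crossover with slow = ratio * fast."""
    slow = ratio * fast
    equity = 1.0
    for t in range(slow, len(prices)):
        fast_ma = sum(prices[t - fast:t]) / fast
        slow_ma = sum(prices[t - slow:t]) / slow
        if fast_ma > slow_ma:  # signal uses only prices before bar t
            equity *= prices[t] / prices[t - 1]
    return equity - 1.0

def parameter_sensitivity(prices, fast, step=1):
    """Score the chosen parameter next to its immediate neighbours."""
    return {f: strategy_return(prices, f) for f in (fast - step, fast, fast + step)}

def k_fold(prices, grid, k):
    """Pick the best parameter on k-1 folds, then score it on the held-out fold."""
    fold_len = len(prices) // k
    folds = [prices[i * fold_len:(i + 1) * fold_len] for i in range(k)]
    results = []
    for held_out in range(k):
        train = [fold for i, fold in enumerate(folds) if i != held_out]
        # Folds are scored separately so non-adjacent periods are never spliced.
        best = max(grid, key=lambda p: sum(strategy_return(fold, p) for fold in train))
        results.append((best, strategy_return(folds[held_out], best)))
    return results

def walk_forward(prices, grid, train_len, test_len):
    """Optimize on each training window, then test on the window that follows it."""
    results = []
    start = 0
    while start + train_len + test_len <= len(prices):
        train = prices[start:start + train_len]
        test = prices[start + train_len:start + train_len + test_len]
        best = max(grid, key=lambda p: strategy_return(train, p))
        results.append((best, strategy_return(train, best), strategy_return(test, best)))
        start += test_len  # roll both windows forward by one test period
    return results

# Assumption: synthetic random-walk prices stand in for real market data.
rng = random.Random(7)
prices = [100.0]
for _ in range(2399):
    prices.append(prices[-1] * (1.0 + rng.gauss(0.0, 0.01)))

grid = [5, 10, 20]  # hypothetical grid of fast periods

sensitivity = parameter_sensitivity(prices, 10)
for fast, score in sensitivity.items():
    print(f"sensitivity  fast={fast:>2}  return={score:.2%}")

cv_results = k_fold(prices, grid, k=4)
for best, score in cv_results:
    print(f"k-fold       best fast={best:>2}  held-out return={score:.2%}")

wf_results = walk_forward(prices, grid, train_len=800, test_len=400)
for best, train_score, test_score in wf_results:
    print(f"walk-forward best fast={best:>2}  train={train_score:.2%}  test={test_score:.2%}")
```

Large swings between neighbouring parameter values, widely varying held-out scores, or test returns that sit consistently far below the training returns are the warning signs the text describes. One caveat on the k-fold variant: it trains on data that comes after the held-out fold in time, which is why walk-forward testing is usually the closer match to how a strategy would actually be deployed.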

In conclusion, identifying overfitting in trading strategies involves rigorous testing and validation methodologies. If a strategy's performance significantly deteriorates when tested on new data or under slightly different conditions, it may be overfitted to the data it was trained on. It's also crucial to note that the optimization and validation process should align with the trader's risk tolerance, trading style, and the nature of the markets they're involved in.